Documentation Index

Fetch the complete documentation index at: https://docs.rime.ai/llms.txt

Use this file to discover all available pages before exploring further.

Introduction

Why on-premises?

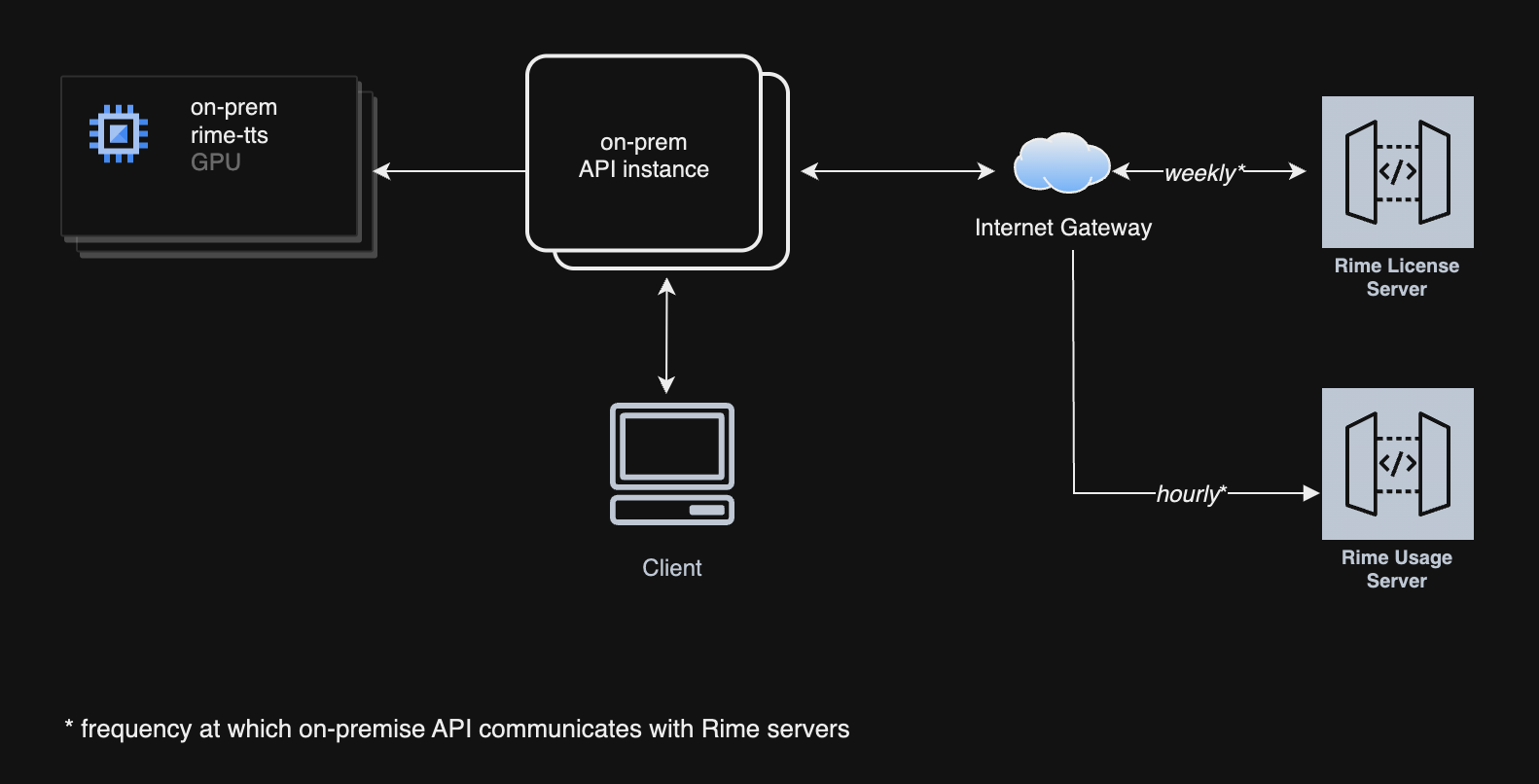

Deploying on-premises offers several advantages over using cloud APIs over a public network. One of the main benefits is speed; by hosting the services locally, you can significantly reduce network latency, resulting in faster system responses and data processing.Security

With an on-premises deployment, all sensitive data remains within your corporate network, ensuring enhanced security as it is not transmitted over the Internet. This setup helps in complying with strict data privacy and protection regulations.Performance

Latency

- Coda: Available on-premises. Setup details and performance numbers coming in a follow-up release.

- Mist v2: Our tests have shown median latency of 175ms with randomly generated sentences between 40 and 50 characters on A10Gs and similar GPUs.

- Arcana: See performance tuning.

Components

Prerequisites

Hardware requirements

- GPU

- For Mist

- NVIDIA T4, L4, A10, or higher

- For Arcana

- NVIDIA A100, H100 MIG

3g.40gb, or higher

- NVIDIA A100, H100 MIG

- For Mist

- Storage

- 50 GB storage

- CPU

- 8 vCPUs

- Memory requirements

- 32 GiB

Software requirements

- Supported Linux Distributions

- Debian 12 (

bookworm), x86_64 - Ubuntu Server 24.04 (

jammy), x86_64

- Debian 12 (

- NVIDIA drivers

- Minimum:

525.60.13 - Recommended:

570.133.20or higher

- Minimum:

- Docker

- NVIDIA Container Toolkit

Installations

NVIDIA drivers

Follow https://www.nvidia.com/en-us/drivers to install the latest NVIDIA drivers, or use the following instructions on Debian-based systems:NVIDIA Driver Installation (Debian-based)

Docker

Follow https://docs.docker.com/engine/install to install Docker on your system. Optionally, add the current user to thedocker group for convenience: https://docs.docker.com/engine/install/linux-postinstall.

The code snippets below assume that you can run docker as the current login.

NVIDIA Container Toolkit

Follow https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/latest/install-guide.html to install the NVIDIA Container Toolkit. Note that you should follow both the Installation and the Configuration sections.Verification

To verify that you have all the prerequisites installed, run the following command:Verify Prerequisites

Firewall requirements

The Rime API instance will listen on port 8000 for HTTP traffic, and on port 8001 for WebSocket traffic. You will also need to allow the following outbound traffic in your firewall rules:https://optimize.rime.ai/usage: registers on-prem usage with our servers.https://optimize.rime.ai/license: verifies that your on-prem license is active.us-docker.pkg.devon port 443: container image registry.

Self-service licensing and credentials

API key Generation

Refer to our user interface dashboard to generate the necessary keys and credentials for authenticating and authorizing the deployment and use of our services.Deployment

The deployment consists of two services, each powered by a container image:- API service: responsible for handling the HTTP and WebSocket requests, and for verifying the license. It serves as a proxy to the TTS service.

- TTS service: responsible for model inference.

Artifact Registry login

Key file to be provided by Rime.Log in to Artifact Registry

Container images

TTS service

Arcana

The Arcana images can be found atus-docker.pkg.dev/rime-labs/arcana/v2/<language>:<tag>.

- The support languages are:

en,es,fr,de,si. - The latest version is

20260420.

Arcana v3 (multilingual)

The Arcana v3 images can be found atus-docker.pkg.dev/rime-labs/arcana/v3/ennea:<tag>.

- The support languages are:

en,es,fr,pt,de,ja,ta,si,he. - The latest version is

20260420.

us-docker.pkg.dev/rime-labs/engine/arcana:<tag>us-docker.pkg.dev/rime-labs/package/arcana/<language>:<tag>

Coda (multilingual)

The Coda v1 images can be found atus-docker.pkg.dev/rime-labs/coda/v1/coda:<tag>.

- The support languages are:

en,es,fr,pt,de,ja. - The latest version is

20260517.

Mist v3 (multilingual)

The Mist v3 images can be found atus-docker.pkg.dev/rime-labs/mist/v3/omni:<tag>

- The support languages are:

de,en,es,fr. - The latest version is

20260420.

API service

The latest image version is:us-docker.pkg.dev/rime-labs/api/service:20260424

Docker Compose configuration

A simple way of deploying on a machine is to use Docker Compose. Create acompose.yml file with your editor of choice to define the services and their configurations:

compose.yml

When running on Kubernetes, ensure thatMODEL_URLpoints tohttp://0.0.0.0:8080/invocationsinstead of the Docker Compose service name.

Multi-model backend

If you want to serve multiple Arcana languages via a single API instance, you can create acompose.yml like the following:

compose.yml

ARCANA_{LANG}_MODEL_URL environment variable must point to the container running the Arcana image for that language,

but you should still point MODEL_URL to a default model container. The model environment variables currently supported are:

Authentication configuration

By default, callers must pass their Rime API key in every request via theAuthorization: Bearer <key> header. Two additional environment variables let you configure authentication at the deployment level instead.

Pre-configuring the API key (RIME_API_KEY)

If you set RIME_API_KEY, the API service will use it to authenticate with the Rime license server automatically, and callers do not need to include an API key in their requests.

You can supply it as an environment variable:

compose.yml

/secrets/rime_api_key inside the container:

compose.yml

Authorization header pathway remains active as normal.

Alternate API key header (API_KEY_HEADER)

On platforms that intercept the Authorization header, set API_KEY_HEADER to the name of an alternate header that callers will use to pass their Rime API key:

compose.yml

Platform API key (PLATFORM_API_KEY)

On platforms that require authenticated inter-container requests, set PLATFORM_API_KEY so the API service can reach the model backend. It can also be mounted as a secret at /secrets/platform_api_key:

compose.yml

Start Docker Compose

Start Docker Compose

Deployment steps

- Environment setup: Prepare your AWS environment according to the specifications required for optimal deployment.

- Service deployment: Using Docker, deploy the images on your server.

- Networking setup: Configure the network settings, including the Internet Gateway and port settings, to ensure connectivity and security.

- Licensing and authentication: Generate and apply the necessary API key via our dashboard to start using the services.

Note: Once the containers are started, expect a five-minute delay for warm-up before sending the first TTS requests.

Additional information

- Troubleshooting guide: A troubleshooting guide will be provided to help resolve common issues during deployment.

- Available voices and models: All voices are currently available.

Requests and response formats

HTTP requests

Request:Health check

Request example

Response format

result.txt

Receiving a response in MP3 format

Request:Request example

result.mp3

Receiving a response in PCM (raw) format

Request:Request example

result.pcm

WebSocket endpoints

JSON websockets

The JSON WebSocket endpoint compatible with botharcana models as well as mist will be served at port 8003. For example, ws://localhost:8003, which will be equivalent to our [cloud websockets-json API.

See the arcana json websockets docs and the mist json websockets docs depending

on which model backend you have configured.

Non-JSON websockets

The non-JSON WebSocket endpoint will be served at port8002. For example, ws://localhost:8002, which will be equivalent to our cloud websockets-json API.

`

Deprecated

A deprecated websockets endpoint will be served on port8001 that is only compatible with the mist model lines, and is equivalent to our cloud websockets-json API.